When dealing with application performance problems, the quick and easy solution is often to throw hardware at the problem. Typically this means adding one of three things: processing power (CPU), memory, or I/O resources. With a bit of luck, the bottleneck is removed and shifts somewhere else, but the total stack is now (hopefully) capable of handling more workload.

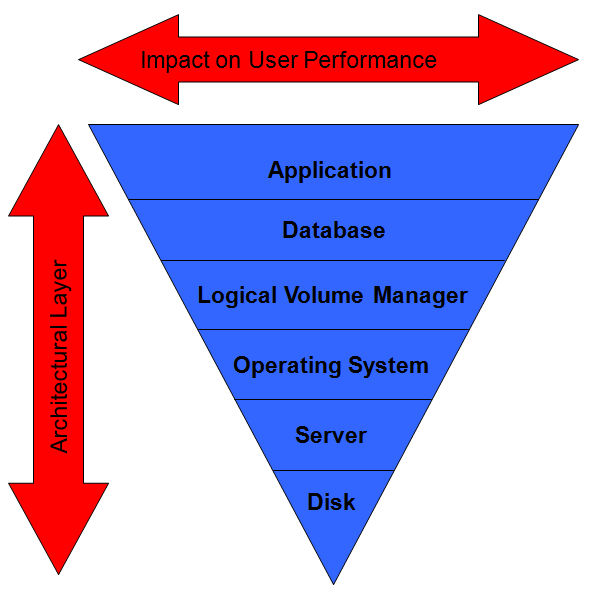

A classic picture in performance theory shows the architectural layers of an application stack and where tuning can achieve the largest impact. The effort required rises with the potential gain. Tuning the application code itself has the most effect but also requires the most effort. Tuning the database requires a bit less effort but also achieves less.

The picture is dramatically over-simplified and ignores a lot of subtleties. But you get the idea.

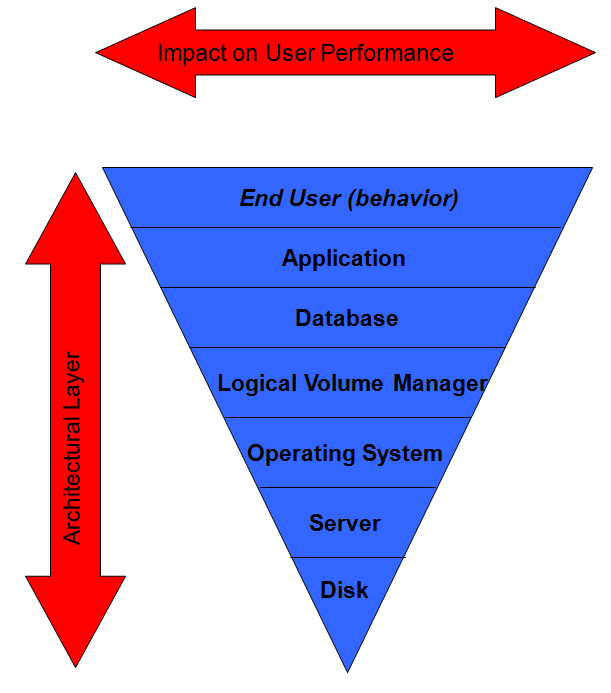

In my own experience, however, we are missing something here. Above the database and application layers there is another infrastructure component that has even more effect on application performance than all other layers combined. The problem is that it is not visible with the classic IT tools, there are no known performance sizing strategies for it, and some IT people seem to believe the extra layer does not even exist…

The end user.

Or better, end user behavior.

My statement:

By influencing the user behavior we can sometimes eliminate performance problems without touching the other layers.

Users are people, and therefore notoriously unpredictable. Unlike computers, they have feelings and egos, and they can be tired, impatient, or nervous. Some are smart (and can “outsmart” the system) and others are not so smart (and we all know that one fool can cause more damage than ten smart programmers could ever prevent). They will not change their behavior because of what computers tell them. Or will they?

An example.

Years ago I managed a database server that ran financial transaction processing. The system also offered reports through a simple forms front-end. In a request box, the user could enter a search keyword (or a date range, or whatever) so that the database limited its search to matching records. The field was blank by default. If the user started the search without entering anything, it triggered a full table scan that could take several minutes. The front-end application was blocked until the query completed, so the user could do nothing else in the meantime.

Some users got impatient and aborted their front-end application, only to restart it and fire off another heavy query. It took the database about 10 minutes to time out and cancel the abandoned connection. Until then it was trying like crazy to fetch data that, obviously, would never make it to the user anyway. You can imagine that if a few users did this at the same time, the database could slow down to the point that even the transaction processing suffered heavily.

Our solution?

Force the users to enter a search keyword, time range, or whatever. If they really wanted to search for everything, they had to go to another forms page a few levels deeper. The default page would simply not allow starting a search while the search field was empty.

Problem solved. Application code changes? Hardly any (slight modification of the front-end forms layout).
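To make the idea concrete, here is a minimal sketch of that kind of guard. It is not the original forms code, which I no longer have. The Python setting and all names in it (`SearchRequest`, `validate_search`, `allow_full_scan`) are made up for illustration. The point is only that the check happens before any query reaches the database.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class SearchRequest:
    """Hypothetical shape of what the report form submits."""
    keyword: str = ""
    date_from: Optional[date] = None
    date_to: Optional[date] = None
    # Assumption: only the deeper "search everything" page sets this flag.
    allow_full_scan: bool = False


class EmptySearchError(ValueError):
    """Raised when a search would run without any filter."""


def validate_search(request: SearchRequest) -> SearchRequest:
    """Reject unfiltered searches before they are sent to the database."""
    has_keyword = bool(request.keyword.strip())
    has_date_range = request.date_from is not None and request.date_to is not None
    if has_keyword or has_date_range or request.allow_full_scan:
        return request
    raise EmptySearchError("Enter a keyword or a date range before searching.")
```

A blank or whitespace-only keyword raises `EmptySearchError`, so the front-end can show a message instead of starting a full table scan. A user who really wants everything still can, but only through the page that sets `allow_full_scan`.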

You can imagine that in most organizations, users generate unnecessary workload on IT systems. They create reports, fire off print jobs, repeatedly download the same data to their Excel spreadsheets or Access databases, and so on. If you can find out how they behave and gently influence them to do it differently, it might save a lot of performance tuning effort and even investments in new hardware.

And in some cases, the users themselves will work more efficiently, too.
